Neil Kempin

10.25.2013

Recently, a good friend showed me how Google Voice improves upon voicemail by doing its best to transcribe your messages. I was impressed that the ones I had left her in the past were transcribed perfectly. This is really cool, and what’s even cooler are the messages from her grandmother, who speaks with a fairly thick accent. Naturally, the voice recognition software Google uses isn’t going to be able to understand everything, and what comes out when the words are continuously unclear sounds like some sort of faulty robot reading a Mad Lib.

Once we finished laughing at all the bad transcriptions (skipping the good ones), I was longing for more. I thought it might be cool to make a kind of feedback loop where the iterative decay in the line comes from Google Voice’s misinterpretations of an imperfect voice, say, the simple built-in text-to-speech software that you can reach by right-clicking on text on a Mac.

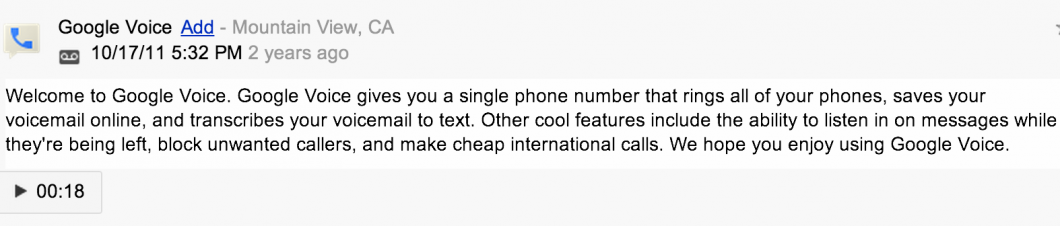

So I called my own Google Voice number with my cell phone, waited for the voicemail beep, and then had ‘Alex’ (the main man for Mac text-to-speech) recite the Google Voice welcome message for me. After the message is left, Google transcribes it to text. I then take that text and have ‘Alex’ read it into another voicemail, over and over.
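For anyone who would rather see the loop as code, here is a minimal Python sketch of the idea. I did the real thing by hand, so everything here is an assumption for illustration: `feedback_loop`, `round_trip`, and `drop_last_word` are made-up names, and `drop_last_word` is only a toy stand-in for "Alex reads it, Google Voice transcribes it", not anything Google actually does.

```python
from typing import Callable, List


def feedback_loop(
    message: str,
    round_trip: Callable[[str], str],
    max_rounds: int = 10,
) -> List[str]:
    """Feed each transcription back in as the next message.

    round_trip is whatever turns text into speech and back into text.
    In the real experiment that step was manual (text-to-speech into a
    voicemail, then copying the transcript out again).
    """
    history = [message]
    for _ in range(max_rounds):
        heard = round_trip(history[-1])
        if not heard.strip():
            # Nothing came back, so there is nothing left to re-record.
            break
        history.append(heard)
    return history


def drop_last_word(text: str) -> str:
    """Toy stand-in for a lossy transcription: lose one word per pass."""
    return " ".join(text.split()[:-1])


if __name__ == "__main__":
    for i, line in enumerate(feedback_loop("welcome to google voice", drop_last_word)):
        print(i, line)
```

Swapping `drop_last_word` for a function that really speaks and transcribes is the hard part, and the sketch leaves that open on purpose.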

Original Message:

A quick side note: Alex has a great voice. It’s really clear, and he pronounces every single syllable like it’s absolutely precious. However, his cadence and intonation are really bad, and it sounds like he’s got some type of distant galaxy android heritage (potential descendant of robo-grandpappy Zarvox?). He’s just the guy for the job, though: Google Voice has no idea what he’s saying.

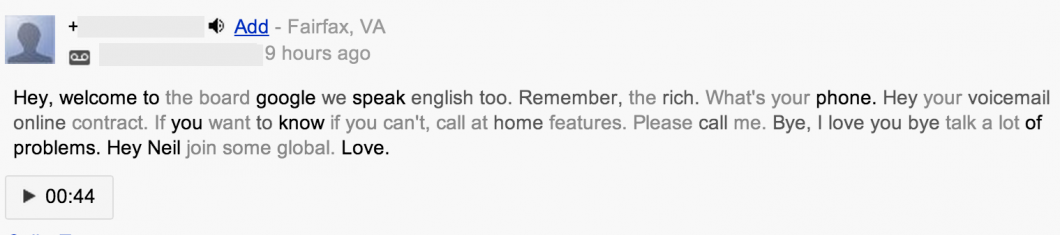

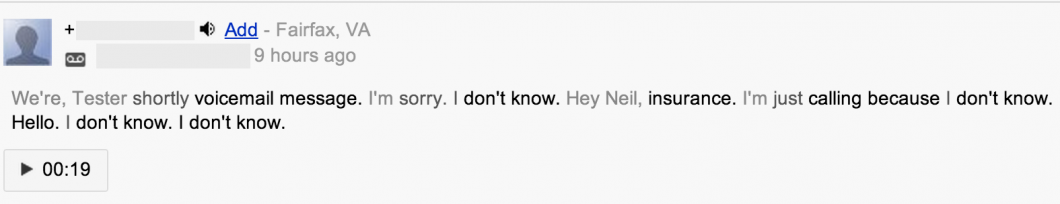

First Transcription:

Fantastic start. I like the use of my name in there, very resourceful. Also, I’m now beginning to notice the word “love” being thrown in quite often, which reminds me of other bad transcriptions I’ve seen… dangerous…

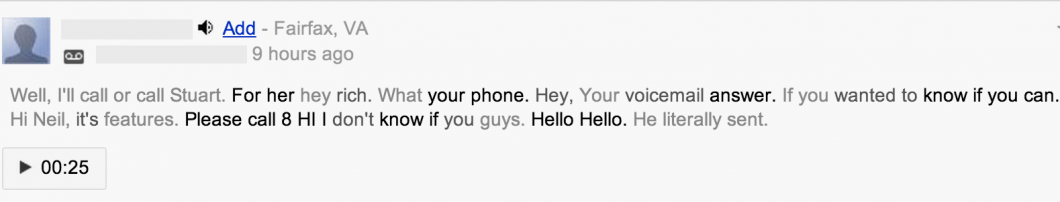

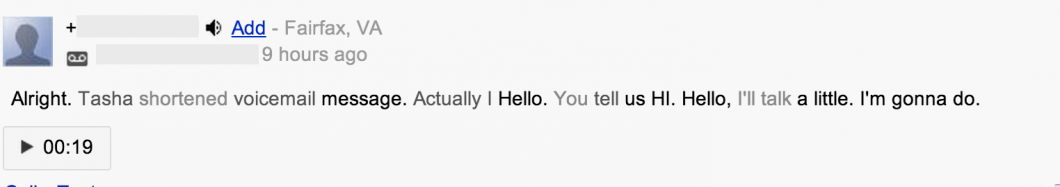

Second Transcription:

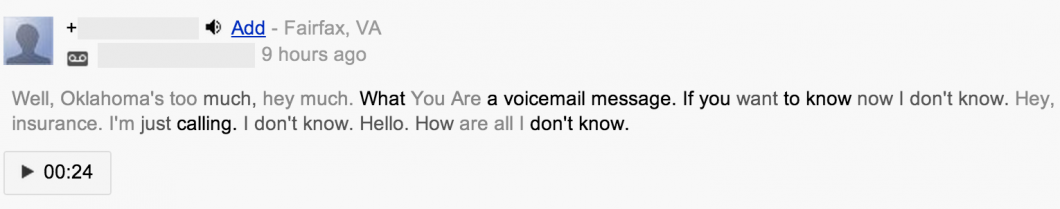

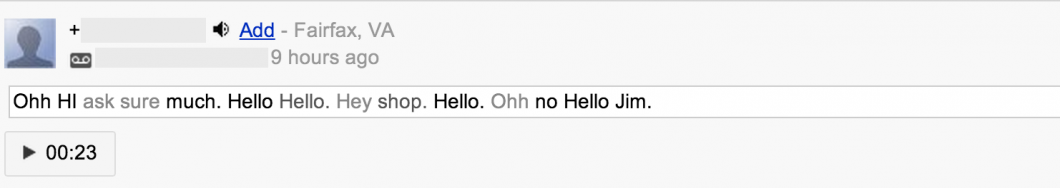

Third Transcription:

I agree about the Oklahoma thing.

Fourth Transcription:

Fifth Transcription:

Sixth Transcription:

A friend of mine works down in Mountain View and sent me this photo of what was going on:

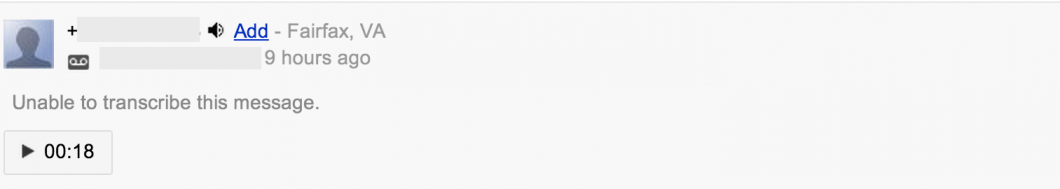

Seventh And Final Transcription:

It has finally given up. I suppose that more of the process relies on context clues than I had assumed. Context and meaning appear to play a big enough role that a sufficiently nonsensical message gets abandoned when there isn’t enough meaning present.

Other Thoughts

I think there are a lot of cool things that can be done with speech recognition engine misunderstandings. The people designing this type of software have to put a lot of work into the analysis of not only sounds and speech patterns but also meaning and context. Grasping meaning and context is not a particularly native ability of the computer, and the quirks of its implementation can be entertaining. It would be interesting to compare the results of different speech recognition engines on the same messages and try to find patterns that indicate something about each engine’s nature, as if it were a living entity. Imagine finding Siri to be unintentionally biased in some ways, or Google Voice’s interpreter to be somewhat more aggressive than others.

Of course, these conclusions wouldn’t actually mean too much, since this is just automatic robotic software in the end, but in a way they are very entertaining. I think it’s fair to say that if a machine or piece of software plays a role in our lives (even a minor or behind-the-scenes role like speech recognition), its pseudo-characteristics are to some degree relevant. A computer program can be a “real” entity, playing some part in our lives similar to the way living things do.

Maybe that “some degree” is just smaller or larger depending on how well you are able to discern just how many roles the software is playing, intended or not.